There may be no more blatant example of the disconnect between the software engineering community and the practice of programming than the lack of widespread recognition for the fundamental role of loop invariants.

Let’s recall the basics, as they are taught in the fourth week or so of the ETH introductory programming course [1], from the very moment the course introduces loops. A loop is a mechanism to compute a result by successive approximations. To describe the current approximation, there is a loop invariant. The invariant must be:

- Weak enough that we can easily ensure it on a subset, possibly trivial, of our data set. (“Easily” means that this task is substantially easier than the full problem we are trying to solve.)

- Versatile enough that if it holds on some subset of the data we can easily (in the same sense) make it hold on a larger subset — even if only slightly larger.

- Strong enough that, when it covers the entire data, it yields the result we seek.
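The three requirements can be made concrete in code. The following is a minimal Python sketch, not taken from the course: the helper `run_loop` and its parameters are hypothetical names, the loop state is an arbitrary value, and the invariant is an ordinary predicate checked with `assert` after initialization and after every iteration.

```python
from typing import Callable, TypeVar

S = TypeVar("S")


def run_loop(
    state: S,
    invariant: Callable[[S], bool],
    done: Callable[[S], bool],
    step: Callable[[S], S],
) -> S:
    """Hypothetical helper: run a loop from an initial state, checking its invariant."""
    # Requirement 1: the initial state must satisfy the invariant.
    assert invariant(state), "initialization does not establish the invariant"
    while not done(state):
        state = step(state)
        # Requirement 2: each iteration must preserve the invariant.
        assert invariant(state), "loop body does not preserve the invariant"
    # Requirement 3: invariant plus exit condition must yield the result sought.
    return state


# Usage: summing a list; the state (i, s) satisfies s == sum(a[:i]).
a = [3, 1, 4, 1, 5]
i, s = run_loop(
    (0, 0),
    invariant=lambda st: st[1] == sum(a[: st[0]]),
    done=lambda st: st[0] == len(a),
    step=lambda st: (st[0] + 1, st[1] + a[st[0]]),
)
print(s)  # 14
```

Here the invariant holds trivially on the empty slice, each step extends the slice by one element, and at exit (i equal to the length of a) it states that s is the sum of the whole list.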

As a simple example, assume we seek the maximum of an array a of n numbers, indexed from 1. The invariant states that Result is the maximum of the array slice from 1 to i. Indeed:

- We can trivially obtain the invariant by setting Result to be a [1]. (It is then the maximum of the slice a [1..1].)

- If the invariant holds, we can extend it to a slightly larger slice — larger by just one element — by increasing i by 1 and updating Result to be the greater of the previous Result and the element a [i] (for the new i).

- When the slice covers the entire array — that is, i = n — the invariant tells us that Result is the maximum of the slice a [1..n], giving us the result we seek.

You cannot understand the corresponding program text

```eiffel
from
	i := 1; Result := a [1]
until i = n loop
	i := i + 1
	if Result < a [i] then Result := a [i] end
end
```

without understanding the loop invariant. That is true even of people who have never heard the term: they will somehow form a mental image of the intermediate situation that justifies the algorithm. With the formal notion, the reasoning becomes precise and checkable. The difference is the same as between a builder who has no notion of theory, and one who has learned the laws of mechanics and construction engineering.
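For readers who want to run the example, here is a Python transcription of the Eiffel loop, a sketch in which the invariant is written as a run-time assertion. Python lists are indexed from 0, so the text's slice a [1..i] becomes `a[:i]`; the name `array_max` is ours, and the `max` built-in appears only inside the assertions that state the invariant.

```python
from typing import List


def array_max(a: List[int]) -> int:
    """Maximum of a non-empty list, with the loop invariant checked at run time."""
    n = len(a)
    assert n >= 1, "the algorithm assumes a non-empty array"
    # Initialization: the slice a[:1] holds one element, trivially its own maximum.
    i = 1
    result = a[0]
    assert result == max(a[:i])  # invariant holds initially
    while i != n:  # Eiffel: until i = n loop
        i += 1
        if result < a[i - 1]:  # a[i - 1] is a [i] in the 1-based text
            result = a[i - 1]
        assert result == max(a[:i])  # invariant preserved by the body
    # On exit i == n, so the invariant says result is the maximum of the whole list.
    return result


print(array_max([3, 7, 2, 7, 5]))  # 7
```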

As another example, take Levenshtein distance (also known as edit distance). It is the minimum number of operations (insert, delete or replace a character) needed to transform one string into another. The algorithm (a form of dynamic programming) fills in a matrix top to bottom and left to right, each entry being one plus the minimum of the three neighboring entries to the top and left, except if the corresponding characters in the strings are the same, in which case it keeps the top-left neighbor’s value. The basic operation in the loop body reads

```eiffel
if source [i] = target [j] then
	dist [i, j] := dist [i-1, j-1]
else
	dist [i, j] := min (dist [i, j-1], dist [i-1, j-1], dist [i-1, j]) + 1
end
```

You can run this and see it work, filling the array cell after cell, then delivering the result at dist [M, N], the bottom-right entry, M and N being the lengths of the source and target strings. Or just watch the animation on page 60 of [2]. It works, but why it works remains a total mystery until someone tells you the invariant:

Every value dist [p, q] filled so far is the minimum distance from the initial substring of the source, containing the characters at positions 1 to p, to the initial substring of the target, positions 1 to q.

This is the rationale for the above code: we want to compute the next value, at position [i, j]. If the corresponding characters in the source and target are the same, no operation is needed to extend the result we had in the top-left neighbor (position [i-1, j-1]). If not, the best we can do is the minimum we can get by extending the results obtained for our three neighbors: through the deletion of source [i] if the minimum comes from the neighbor [i-1, j]; through the insertion of target [j] if it comes from the neighbor [i, j-1]; or through the replacement of source [i] by target [j] if it comes from the top-left neighbor [i-1, j-1].

With this explanation, a mysterious, almost hermetic algorithm instantly becomes crystal-clear.
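The loop body above is only part of the algorithm: the first row and column of dist must also be initialized, to the distances from or to the empty string. The following Python sketch completes it and, when `check` is set, verifies the invariant after every assignment by comparing each entry filled so far with a separate reference, `reference_distance`, which applies the textbook recurrence (always taking the minimum of all three options, with zero cost for matching characters). Both function names are illustrative, strings are 0-indexed (so `source[i - 1]` is source [i] of the text), and the check is far too slow for anything but short strings.

```python
from functools import lru_cache


def reference_distance(s: str, t: str) -> int:
    """Textbook recursive definition (memoized), used only to check the invariant."""
    @lru_cache(maxsize=None)
    def d(p: int, q: int) -> int:
        if p == 0:
            return q  # insert the first q characters of t
        if q == 0:
            return p  # delete the first p characters of s
        return min(
            d(p - 1, q) + 1,                           # delete s[p]
            d(p, q - 1) + 1,                           # insert t[q]
            d(p - 1, q - 1) + (s[p - 1] != t[q - 1]),  # keep or replace
        )
    return d(len(s), len(t))


def levenshtein(source: str, target: str, check: bool = False) -> int:
    m, n = len(source), len(target)
    # dist[p][q] will hold the distance between source[:p] and target[:q].
    dist = [[0] * (n + 1) for _ in range(m + 1)]
    for p in range(m + 1):
        dist[p][0] = p  # delete all p characters
    for q in range(n + 1):
        dist[0][q] = q  # insert all q characters
    for i in range(1, m + 1):      # top to bottom
        for j in range(1, n + 1):  # left to right
            if source[i - 1] == target[j - 1]:
                dist[i][j] = dist[i - 1][j - 1]
            else:
                dist[i][j] = min(dist[i][j - 1], dist[i - 1][j - 1], dist[i - 1][j]) + 1
            if check:
                # Invariant: rows 0..i-1 entirely, and row i up to column j,
                # hold the true distances between the corresponding prefixes.
                for p in range(i + 1):
                    last = n if p < i else j
                    for q in range(last + 1):
                        assert dist[p][q] == reference_distance(source[:p], target[:q])
    return dist[m][n]


print(levenshtein("kitten", "sitting", check=True))  # 3
```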

Yet another example is in-place linked list reversal. The body of the loop is a pointer ballet:

```eiffel
temp := previous
previous := next
next := next.right
previous.put_right (temp)
```

with proper initialization (set next to the value of first and previous to Void), exit condition (next is Void) and finalization (set first to the value of previous). This is not the only possible implementation, but all variants of the algorithm use a very similar scheme.

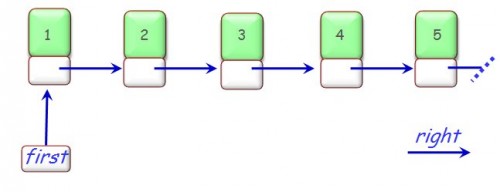

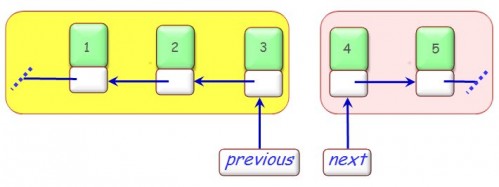

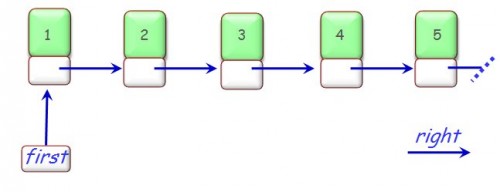

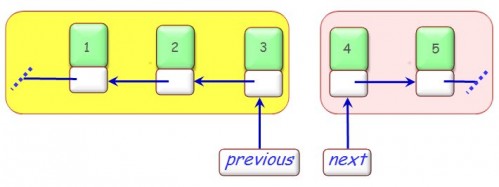

Again the code looks abstruse, and hard to get right if you do not remember it exactly. As in the other examples, the only way to understand it is to see the invariant, describing the intermediate state after a typical loop iteration. If the original situation was this:

List reversal: initial state

then after a few iterations the algorithm yields this intermediate situation:

List reversal: intermediate state

The figure illustrates the invariant:

Starting from previous and repeatedly following right links yields the elements of some initial part of the list, but in the reverse of their original order; starting from next and following right links yields the remaining elements, in their original order.

Then it is clear what the loop body with its pointer ballet is about: it moves the boundary between the two parts one position to the right, making sure that the invariant holds again in the new state, with one more element in the first (yellow) part and one fewer in the second (pink) part. At the end the second part will be empty and the first part will encompass all elements, so that (after resetting first to the value of previous) we get the desired result.
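The invariant can also be checked mechanically. Here is a Python sketch of the same pointer ballet, under these assumptions: a minimal, hypothetical `Node` class with fields `item` and `right` stands in for the list cells (`put_right (temp)` becomes an assignment to `right`), `next` is renamed `next_node` to avoid shadowing a Python built-in, and a snapshot of the original items, taken only for the assertions, lets us state the invariant for the k elements already moved.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    """Hypothetical list cell: an item and a link to the right neighbor."""
    item: int
    right: Optional["Node"] = None


def items_from(node: Optional[Node]) -> List[int]:
    """Items obtained by starting at node and repeatedly following right links."""
    items: List[int] = []
    while node is not None:
        items.append(node.item)
        node = node.right
    return items


def reverse(first: Optional[Node]) -> Optional[Node]:
    original = items_from(first)  # snapshot, used only to state the invariant
    previous: Optional[Node] = None
    next_node = first
    k = 0  # number of elements already moved to the reversed part

    def invariant() -> bool:
        # previous leads to the first k elements in reverse order;
        # next_node leads to the remaining elements in their original order.
        return (items_from(previous) == original[:k][::-1]
                and items_from(next_node) == original[k:])

    assert invariant()
    while next_node is not None:
        temp = previous
        previous = next_node
        next_node = next_node.right
        previous.right = temp  # previous.put_right (temp)
        k += 1
        assert invariant()
    # Exit: next_node is None, so previous leads to all elements, reversed.
    return previous  # the caller sets first to this value


head = None
for x in [4, 3, 2, 1]:
    head = Node(x, head)
print(items_from(reverse(head)))  # [4, 3, 2, 1]
```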

This example is particularly interesting because list reversal is a standard interview question for programmers seeking a job; as a result, dozens of pages around the Web helpfully present algorithms for the benefit of job candidates. I ran a search on “List reversal algorithm” [3], which yields many such pages. It is astounding to see that among the first fifteen or so hits, which include pages from programming courses at both Stanford and MIT, not a single one mentions invariants, or (even without using the word) gives the above explanation. The situation is all the more bizarre given that many of these pages (read them for yourself!) go into intricate details about variants of the pointer manipulations. There are essentially no correctness arguments.

If you go a bit further down the search results, you will find some papers that do reference invariants, but here is the catch: rather than programming or algorithms papers, they are papers about software verification, such as one by Richard Bornat that uses a low-level (C) version of the example to illustrate separation logic [4]. These are good papers, but they are completely distinct from those directed at ordinary programmers, who simply wish to learn a basic algorithm, understand it in depth, and remember it on the day of the interview and beyond.

This chasm is wrong. Software verification techniques are not just good for the small phalanx of experts interested in formal proofs. The basic ideas have potential applications to the daily business of programming, as the practice of Eiffel has shown (this is the concept of “Verification As a Matter Of Course” briefly discussed in an earlier post [5]). Absurdly, the majority of programmers do not know them.

It’s not that they cannot do their job: somehow they eke out good enough results, most of the time. After all, the European cathedrals of the Middle Ages were built without the benefit of sophisticated mathematical models, and they still stand. But today we would not hire a construction engineer who had not studied the appropriate mathematical techniques. Why should software engineering be different, and why deprive practitioners of the benefits of solid, well-accepted theory?

As a modest first step, there is no excuse, ever, for publishing a loop without the basic evidence of its adequacy: the loop invariant.

References

[1] Bertrand Meyer: Touch of Class: Learning to Program Well, Using Objects and Contracts, Springer, 2009. See course page (English version) here.

[2] Course slides on control structures, here in PowerPoint (or here in PDF, without the animation); see example starting on page 51, particularly the animation on page 54. More recent version in German here (and in PDF here), animation on page 60.

[3] For balance I ran the search using Qrobe, which combines results from Ask, Bing and Google.

[4] Richard Bornat, Proving Pointer Programs in Hoare Logic, in MPC ’00, 5th International Conference on Mathematics of Program Construction, 2000, available here.

[5] Bertrand Meyer, Verification as a Matter of Course, a post on this blog.
